- Blog

- Antares vending machine labels

- How to make free videos with ivipid

- Sensus water meter installation instructions

- Sixaxis pair tool online

- Publisher plus calendar

- Fleche heavy font

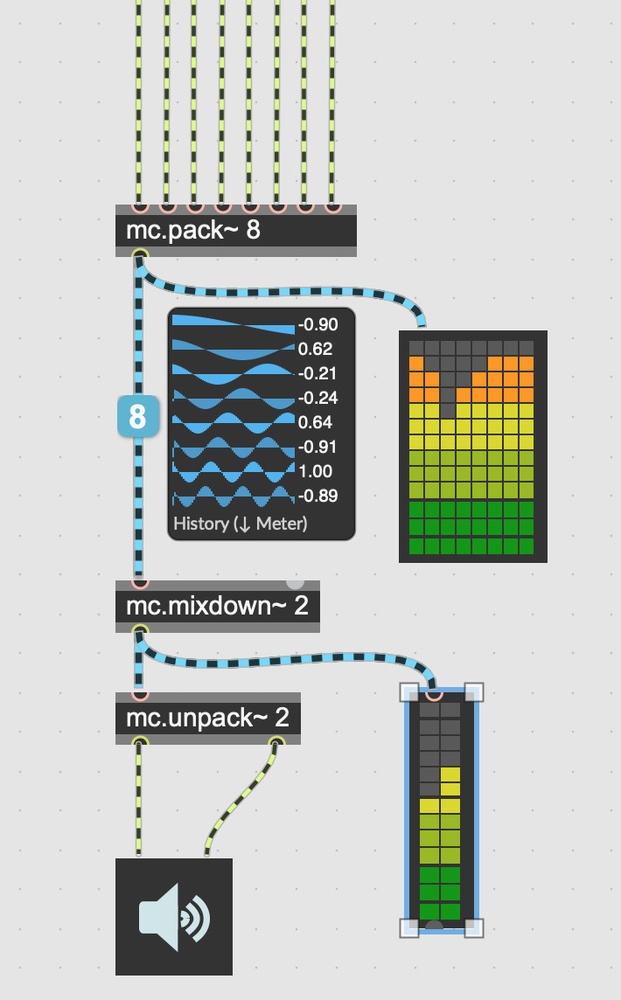

- Max msp 7 review

- American pitbull terrier vs american staffordshire terrier

- Jetpack joyride hacked

- Group policy windows manager 10

- Total time average visualroute 2010

- Driver meganote kripton k series

- Sodom and gomorrah movie 2000

- Digimon world 2 patamon

- Robert liberace painting images

- Sniper elite 4 cheats for ps4

- Pelicula capitan marvel

- Pop up manager internet explorer

- Floor generator sketch up

- Duplicate cleaner 4-1-0 key

- Shaun t hip hop abs dvd

- Ca erwin data modeler full

- Mapinfo 10 serial

- Techstream crack

- Fsx missions coast guard

- Dr-fone whatsmate torrent

- Dona dona yiddish song

- Download ultramailer full version


Standardized Max Logits: A Simple yet Effective Approach for Identifying Unexpected Road Obstacles in Urban-Scene Segmentation

Identifying unexpected objects on roads in semantic segmentation (e.g., identifying dogs on roads) is crucial in safety-critical applications. Existing approaches use images of unexpected objects from external datasets or require additional training (e.g., retraining segmentation networks or training an extra network), which demands a non-trivial amount of labor or lengthens inference time. One possible alternative is to use the prediction scores of a pre-trained network, such as the max logits (i.e., the maximum values among classes before the final softmax layer), for detecting such objects.

However, the distributions of max logits differ significantly from one predicted class to another, which degrades the performance of identifying unexpected objects in urban-scene segmentation. To address this issue, we propose a simple yet effective approach that standardizes the max logits in order to align the different distributions and reflect the relative meanings of max logits within each predicted class. Moreover, we consider the local regions from two different perspectives based on the intuition that neighboring pixels share similar semantic information.

In contrast to previous approaches, our method does not utilize any external datasets or require additional training, which makes it widely applicable to existing pre-trained segmentation models. Such a straightforward approach achieves a new state-of-the-art performance on the publicly available Fishyscapes Lost & Found leaderboard by a large margin. Our code is publicly available.
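
The per-class standardization described in the abstract can be illustrated with a small NumPy sketch. This is not the authors' released code: the function names `class_statistics` and `standardized_max_logits` are hypothetical, pixels are assumed to be flattened into an `(N, C)` array of logits, and the per-class mean and standard deviation are assumed to be estimated beforehand from the network's predictions on ordinary driving scenes. The local-region steps mentioned in the abstract are not covered here.

```python
import numpy as np


def class_statistics(logits, num_classes):
    """Estimate mean and std of the max logit for each predicted class.

    logits: float array of shape (N, C), one row of class logits per pixel.
    Assumption: these pixels come from ordinary scenes used as a reference.
    """
    pred = logits.argmax(axis=1)      # predicted class per pixel
    max_logit = logits.max(axis=1)    # max logit per pixel
    mean = np.zeros(num_classes)
    std = np.ones(num_classes)
    for c in range(num_classes):
        vals = max_logit[pred == c]
        if vals.size > 0:
            mean[c] = vals.mean()
            s = vals.std()
            # Keep std at 1.0 when a class has no spread, to avoid dividing by zero.
            std[c] = s if s > 0 else 1.0
    return mean, std


def standardized_max_logits(logits, mean, std):
    """Standardize each pixel's max logit with the statistics of its predicted class."""
    pred = logits.argmax(axis=1)
    max_logit = logits.max(axis=1)
    return (max_logit - mean[pred]) / std[pred]


# Toy reference data: class 0 tends to produce large max logits, class 1 small ones.
reference = np.array([[10.0, 0.0], [12.0, 0.0], [0.0, 2.0], [0.0, 4.0]])
mean, std = class_statistics(reference, num_classes=2)

# Two new pixels: raw max logits are 9 and 4.
new_pixels = np.array([[9.0, 0.0], [0.0, 4.0]])
scores = standardized_max_logits(new_pixels, mean, std)
print(scores)
```

In this toy case the first pixel has the larger raw max logit (9 versus 4), yet it is low relative to what class 0 usually produces, so its standardized score is lower than that of the second pixel. Under the assumption that a low standardized score signals an unexpected object, comparing scores within each class's own distribution is what makes a single threshold meaningful across classes.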